Secure Apache Airflow Using a Custom Security Manager

Leverage a human proxy to automatically log users in to the Airflow web console

Background

Apache Airflow is an open source orchestration tool for programmatically authoring, scheduling, and monitoring workflows. Square uses it as critical infrastructure for managing the ETL jobs that aggregate metrics for executive dashboards, product analysis, and machine learning.

Square runs Apache Airflow in a multi-tenant environment: different teams may run their DAGs on the same Airflow cluster.

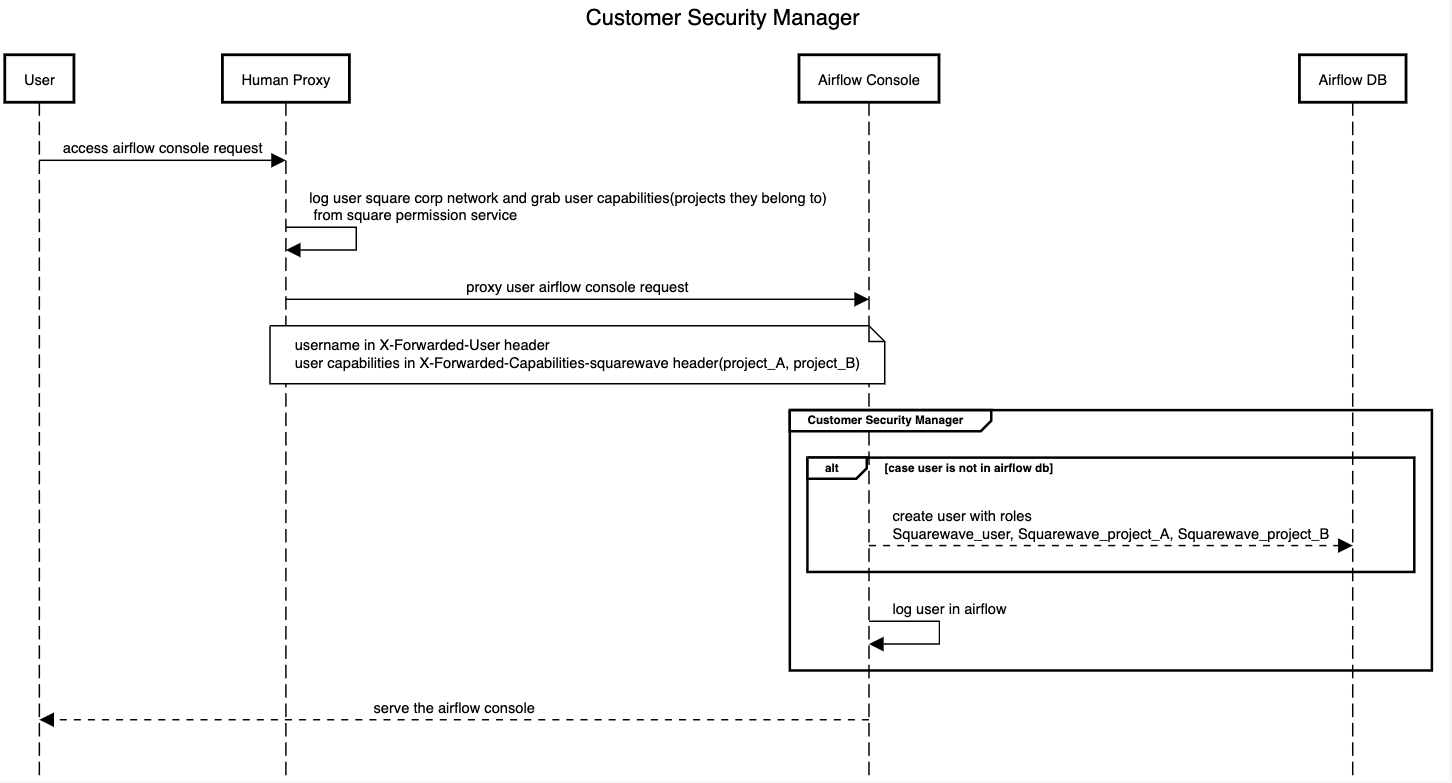

Square also has a human proxy sitting between Square users and the Airflow web console. The human proxy logs Square users in, fetches their identities and capabilities from other Square systems, and passes that information in HTTP headers when proxying requests to the Airflow web console.

Security Goals

We want an auth strategy (authN and authZ) that distinguishes regular users from administrators and from users on other teams in the Airflow console, with DAG-level permission control. For example, you do not want users from a different team who share your Airflow cluster to delete your DAGs accidentally.

We also want to log users in to the Airflow web console automatically, based on the HTTP headers from the human proxy, instead of requiring them to re-enter a username and password; the human proxy has already logged them in.

Finally, we do not want a job that syncs Square user info and permissions with Airflow. Such a job would delay permission updates and new-user provisioning, and it would become a maintenance burden. I have seen a few posts from other companies that use such a sync job.

A Short Summary of Square’s Security Requirements

1. Users have basic READ permissions on Task, DAG, and DAGRun.

2. Users cannot see some UI components, such as the admin menu for configuring pools and connections, or the security menu for creating users, roles, permissions, etc.

3. Users only have WRITE permissions on the DAGs they own.

4. Admin has all permissions.

5. The Airflow web console can read user identities and capabilities from the Square human proxy and automatically log the user in.

6. Prefer not to have an offline batch job syncing permissions between Square and Airflow.

How Security Works in Airflow

Before we talk about our proposal, let’s take a look at how Airflow security works.

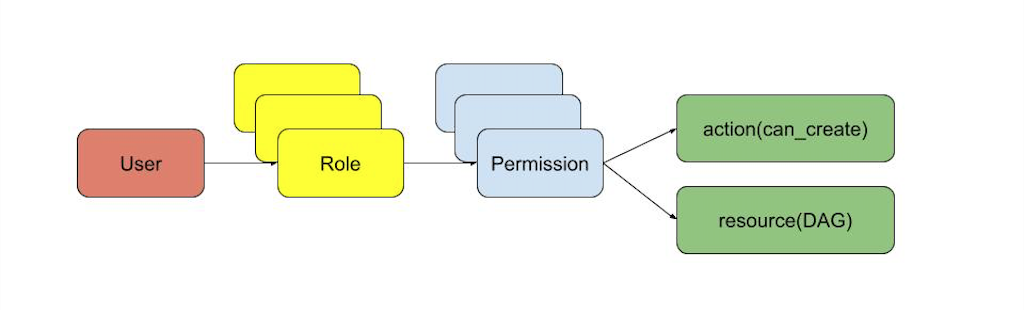

Since 1.10, Airflow has built the role-based access control (RBAC) of the web console on top of Flask AppBuilder (F.A.B). A user can have multiple roles, and different users can share the same role. Each role can have multiple permissions, and each permission allows an action against a resource. In the graph above, the permission grants the user the ability to create DAGs.

This security model gives us a lot of flexibility in managing user permissions. For example, we can allow a user to clear or rerun a task but not to configure connections and pools. This allows us to achieve requirements 1 & 2.
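To make the user, role, and permission relationship concrete, here is a minimal sketch in plain Python. It is not Airflow or F.A.B code: the class names, the task_operator role, and the action and resource strings are all illustrative assumptions, chosen only to mirror the example of a user who can clear a task but cannot configure connections.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, Set


@dataclass(frozen=True)
class Permission:
    """An action allowed against a resource (names here are illustrative)."""
    action: str
    resource: str


@dataclass(frozen=True)
class Role:
    """A named bundle of permissions that many users can share."""
    name: str
    permissions: FrozenSet[Permission]


@dataclass
class User:
    username: str
    roles: Set[Role] = field(default_factory=set)

    def can(self, action: str, resource: str) -> bool:
        # A user holds a permission if any one of their roles grants it.
        wanted = Permission(action, resource)
        return any(wanted in role.permissions for role in self.roles)


# Hypothetical role: may clear tasks and read DAGs, nothing else.
task_operator = Role(
    "task_operator",
    frozenset({Permission("can_clear", "Task"), Permission("can_read", "DAG")}),
)
alice = User("alice", {task_operator})

print(alice.can("can_clear", "Task"))       # granted by task_operator
print(alice.can("can_edit", "Connection"))  # not granted by any role
```

Because permissions hang off roles rather than users, changing what a whole group may do means editing one role instead of every user.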

Airflow provides 5 default roles with predefined permissions: Admin, Public, User, Viewer, and Op. Airflow also lets an Admin create custom roles and put whatever permissions you want under each role. This allows us to achieve requirement 3.

Airflow also supports DAG-level roles, so you can allow only project owners and Admin to edit the DAGs under a project. This allows us to achieve requirement 3.

In addition, Airflow provides an interface that lets users define their own SECURITY_MANAGER_CLASS, so you can put your own auth logic in your security manager. More details about custom security managers can be found here. This allows us to achieve requirements 5 & 6.

Proposal

Now let’s talk about our strategy. We override Airflow’s default security manager. Our version reads the username and the projects the user owns from the HTTP headers set by the human proxy, creates the user with the proper roles if the user is not already in the database, and logs the user in.

Roles and Permissions

We determine whether a user is an admin, and which projects they own, from the HTTP headers that the Square human proxy adds when it proxies the request to Airflow.
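As a rough illustration of reading identity from proxy headers, the sketch below parses a header mapping into a small identity record. The header names (X-Proxy-Username, X-Proxy-Is-Admin, X-Proxy-Projects), the comma-separated project format, and the ProxyIdentity type are all assumptions made for this example; the post does not show the real headers.

```python
from typing import List, Mapping, NamedTuple


class ProxyIdentity(NamedTuple):
    username: str
    is_admin: bool
    projects: List[str]


# Hypothetical header names; the real proxy's headers are not shown in this post.
USERNAME_HEADER = "X-Proxy-Username"
ADMIN_HEADER = "X-Proxy-Is-Admin"
PROJECTS_HEADER = "X-Proxy-Projects"


def identity_from_headers(headers: Mapping[str, str]) -> ProxyIdentity:
    """Extract the identity that the proxy attached to the request."""
    username = headers.get(USERNAME_HEADER, "").strip()
    if not username:
        # Refuse to guess: a request without an identity must not be logged in.
        raise ValueError("missing username header")
    is_admin = headers.get(ADMIN_HEADER, "").strip().lower() == "true"
    # Assumed format: a comma-separated list of project names.
    raw_projects = headers.get(PROJECTS_HEADER, "")
    projects = [p.strip() for p in raw_projects.split(",") if p.strip()]
    return ProxyIdentity(username, is_admin, projects)


ident = identity_from_headers(
    {"X-Proxy-Username": "alice", "X-Proxy-Projects": "A, B"}
)
print(ident)
```

Inside a Flask view, the mapping passed in would typically be request.headers. Headers like these can only be trusted when the web server is reachable exclusively through the proxy.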

Admin

The admin user will have Airflow’s default Admin role.

Users

- All users will have a basic_user_role, which allows basic operations like viewing the Airflow web console. Our basic_user_role has most of the permissions of the default User role.

- Users will have project roles based on the projects they own. Using the sequence diagram above as an example, the user will have project_A_role and project_B_role. We use project roles to achieve DAG-level access control.
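The role assignment described above can be sketched as a small pure function. The naming scheme project_<name>_role is inferred from the examples in this post, and the choice that an admin receives only the Admin role is an assumption; the function and constant names are hypothetical.

```python
from typing import Iterable, List

ADMIN_ROLE = "Admin"  # Airflow's default Admin role
BASIC_USER_ROLE = "basic_user_role"


def project_role_name(project: str) -> str:
    """Naming scheme assumed from the post's examples, e.g. project_A_role."""
    return f"project_{project}_role"


def role_names_for(is_admin: bool, projects: Iterable[str]) -> List[str]:
    """Return the role names a user should hold."""
    if is_admin:
        # Assumption: Admin already has all permissions, so no project roles.
        return [ADMIN_ROLE]
    # Every regular user gets the basic role plus one role per owned project.
    return [BASIC_USER_ROLE] + [project_role_name(p) for p in projects]


print(role_names_for(False, ["A", "B"]))
print(role_names_for(True, ["A"]))
```

Keeping this mapping free of any database or web framework calls makes it easy to unit test separately from the login view.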

DAG Level Access Control

We add an access_control section to each DAG so that only its owners can modify it. In the example below, only project A’s owners can edit the DAG; other users can only read it.

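```python
from airflow import DAG

# DEFAULT_ARGS is defined elsewhere in the DAG file.
dag = DAG(
    dag_id="project_A_dag",
    default_args=DEFAULT_ARGS,
    schedule_interval="0 0 * * * *",
    access_control={
        # Owners of project A can read and edit this DAG.
        "project_A_role": {"can_dag_read", "can_dag_edit"},
        # Everyone else gets read-only access.
        "basic_user_role": {"can_dag_read"},
    },
)
```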
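If many DAGs share this pattern, the access_control mapping could be generated by a small helper so that role names are not retyped in every DAG file. This is only a sketch: project_access_control is a hypothetical helper, and it assumes the basic role is named basic_user_role as in the prose above.

```python
from typing import Dict, Set


def project_access_control(project: str) -> Dict[str, Set[str]]:
    """Build an access_control mapping for a DAG owned by one project."""
    return {
        # Project owners may read and edit the DAG.
        f"project_{project}_role": {"can_dag_read", "can_dag_edit"},
        # All other users may only read it.
        "basic_user_role": {"can_dag_read"},
    }


acl = project_access_control("A")
print(sorted(acl))
```

A DAG definition would then pass access_control=project_access_control("A").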

Implementations

Custom Security Manager

Airflow auth is built on top of F.A.B, which supports the 5 auth types below. More details about auth in F.A.B can be found here.

- Database: username and password style that is queried from the database to match. Passwords are kept hashed on the database.

- OAuth: Authentication using OAUTH (v1 or v2). You need to install authlib.

- OpenID: Uses the user’s email field to authenticate on Gmail, Yahoo etc…

- LDAP: Authentication against an LDAP server, like Microsoft Active Directory.

- REMOTE_USER: Reads the REMOTE_USER web server environ var, and verifies if it’s authorized with the framework users table. It’s the web server’s responsibility to authenticate the user. This is useful for intranet sites: when the server (Apache, Nginx) is configured to use Kerberos, the user does not need to log in with a username and password on F.A.B.

We think REMOTE_USER is the best fit for our use case. All we need to do is provide our own authremoteuserview to the security manager. In SqAuthRemoteUserView below, we read the info from the HTTP headers, create the user if it does not exist in the Airflow DB, and log the user in.

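```python
import logging

# Import path for the RBAC UI in Airflow 1.10.x.
from airflow.www_rbac.security import AirflowSecurityManager
from flask import g, redirect, session
from flask_appbuilder import expose
from flask_appbuilder.security.views import AuthView
from flask_login import login_user


class SqAuthRemoteUserView(AuthView):
    # get_username_from_header() and get_or_create_roles_from_headers()
    # are helper methods that are not shown in this post.

    @expose("/login/")
    def login(self):
        # Skip the header lookup if the session is already authenticated.
        if g.user is not None and g.user.is_authenticated:
            logging.info(f"{g.user.username} already logged in")
            return redirect(self.appbuilder.get_url_for_index)
        username = self.get_username_from_header()
        self.get_or_create_user(username)
        user = self.appbuilder.sm.auth_user_remote_user(username)
        login_user(user)
        # Discard any flash messages queued before the auto-login.
        session.pop("_flashes", None)
        return redirect(self.appbuilder.get_url_for_index)

    def get_or_create_user(self, username):
        user = self.appbuilder.sm.find_user(username)
        if user is None:
            user = self.appbuilder.sm.add_user(
                username,
                username,  # first name (reuses the username)
                "-",  # dummy last name
                f"{username}@squareup.com",
                self.get_or_create_roles_from_headers(),
                "password",  # dummy password
            )
        return user


class SqSecurityManager(AirflowSecurityManager):
    authremoteuserview = SqAuthRemoteUserView
```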
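The view above calls get_or_create_roles_from_headers, which this post does not show. One possible shape for its role-lookup half is sketched below. It relies on the find_role and add_role methods of the F.A.B security manager; the get_or_create_roles wrapper and the InMemorySecurityManager stand-in (used here so the example runs without a database) are hypothetical.

```python
from typing import Any, Dict, Iterable, List


def get_or_create_roles(security_manager: Any, role_names: Iterable[str]) -> List[Any]:
    """Return role objects for role_names, creating any that do not exist yet."""
    roles = []
    for name in role_names:
        role = security_manager.find_role(name)
        if role is None:
            # First time this role is seen: create it on the fly, so no
            # offline sync job is needed to provision it.
            role = security_manager.add_role(name)
        roles.append(role)
    return roles


class InMemorySecurityManager:
    """Hypothetical stand-in that mimics find_role/add_role for the demo."""

    def __init__(self) -> None:
        self.roles: Dict[str, Dict[str, str]] = {}

    def find_role(self, name: str):
        return self.roles.get(name)

    def add_role(self, name: str):
        return self.roles.setdefault(name, {"name": name})


sm = InMemorySecurityManager()
created = get_or_create_roles(sm, ["basic_user_role", "project_A_role"])
print([role["name"] for role in created])
```

In the real view, self.appbuilder.sm would take the place of the stand-in. Attaching permissions to a newly created role is outside the scope of this sketch.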

Configuration Change

In webserver_config.py, set AUTH_TYPE to AUTH_REMOTE_USER and pass your custom security manager class to SECURITY_MANAGER_CLASS.

```python
from flask_appbuilder.security.manager import AUTH_REMOTE_USER

from sq.lib.sq_security_manager import SqSecurityManager

# The authentication type
# AUTH_OID : Is for OpenID
# AUTH_DB : Is for database
# AUTH_LDAP : Is for LDAP
# AUTH_REMOTE_USER : Is for using REMOTE_USER from web server
# AUTH_OAUTH : Is for OAuth
AUTH_TYPE = AUTH_REMOTE_USER

SECURITY_MANAGER_CLASS = SqSecurityManager
```

In airflow.cfg, enable RBAC under the webserver section.

```
[webserver]
rbac = True
```

Conclusion

In this article, we proposed a way to use a custom security manager to secure Airflow behind a human proxy. The approach is flexible: you can tailor it to your needs by changing the logic in your custom security manager.

That is the end! The Square Data Team is hiring, as always. If you are excited about our purpose of economic empowerment and about building state-of-the-art data infra, come and join us!