Dessa: Open sourcing Atlas

Tools for applied deep learning development

This is a post from Dessa, an applied deep learning company that became part of the Square ecosystem in February 2020.

Today, just a month after joining Square, we’re excited to announce the open source launch of Atlas, a tool for developing applied deep learning projects.

What is Atlas?

Atlas helps machine learning practitioners take projects from 0 to 100 fast, with features that make it easy to run, evaluate and deploy thousands of experiments concurrently. We first built Atlas for ourselves, when few tools existed for tackling some of the bigger challenges specific to deep learning:

- Successful deep learning projects require large-scale experimentation. Without the right tools, it is time-consuming and difficult to accurately track experiments.

- The lack of tools for experiment management meant that a lot of this work was done ad hoc, making it hard to collaborate and reproduce model results.

- Deep learning requires lots of compute, and we found ourselves spending lots of time rigging up the right infrastructure—valuable time that could have been spent experimenting!

These challenges prevented us from getting the full impact we knew our deep learning projects could have, and we were sure that other practitioners were facing the same kinds of roadblocks.

Last July, we released the first version of Atlas to the public, and it has since been downloaded by thousands of machine learning practitioners. Now that the code is open source, we invite users to help make Atlas a tool that shortens the time to results with deep learning even further.

Features

Atlas is a flexible machine learning tool that consists of a Python SDK, CLI, GUI & Scheduler.

SDK:

    # main.py
    import foundations
    import numpy as np

    # <Your training code here>

    NUM_JOBS = 100

    # Assumed starting point: a dict holding your default hyperparameters
    all_params = {}

    # Randomly sample hyperparameters to be tested
    def sample_hyperparameters(all_params):
        all_params['lstm_units'] = [int(np.random.choice([512, 768, 1024]))] * 2
        all_params['zoneout'] = [float(np.random.choice([0.05, 0.1, 0.2]))] * 2
        ...
        return all_params

    # A loop that calls the foundations submit method for different combinations of hyperparameters
    for _ in range(NUM_JOBS):
        all_params = sample_hyperparameters(all_params)
        foundations.submit(
            env="my_personal_aws_instance",
            job_dir="experiments/fraud_detection_mini",
            entrypoint="code/driver.py",
            ram=512,
            num_gpus=1,
            project_name="Fraud Detection",
            params=all_params
        )
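To try the sampling step on its own before submitting anything, here is a minimal sketch of the same random search idea using only the Python standard library. It is not part of the Atlas SDK: `SEARCH_SPACE`, `sample_jobs`, and the `seed` argument are names we made up for illustration, and the search space simply mirrors the values in the snippet above.

```python
import random

# Hypothetical search space, mirroring the values in the SDK snippet above.
SEARCH_SPACE = {
    "lstm_units": [512, 768, 1024],
    "zoneout": [0.05, 0.1, 0.2],
}


def sample_hyperparameters(rng, num_layers=2):
    """Draw one random configuration; each sampled value is repeated per layer."""
    return {
        name: [rng.choice(choices)] * num_layers
        for name, choices in SEARCH_SPACE.items()
    }


def sample_jobs(num_jobs, seed=0):
    """Return num_jobs configurations drawn from a seeded generator."""
    rng = random.Random(seed)  # fixed seed so the same sweep can be regenerated
    return [sample_hyperparameters(rng) for _ in range(num_jobs)]


if __name__ == "__main__":
    # Print a few configurations to inspect them before launching real jobs.
    for job_params in sample_jobs(num_jobs=3):
        print(job_params)
```

Seeding the generator is a design choice for this sketch: it lets you regenerate the exact list of configurations later, which is handy when comparing sweeps.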

CLI:

    $ foundations deploy --env=my_remote_instance --entrypoint=main.py

GUI:

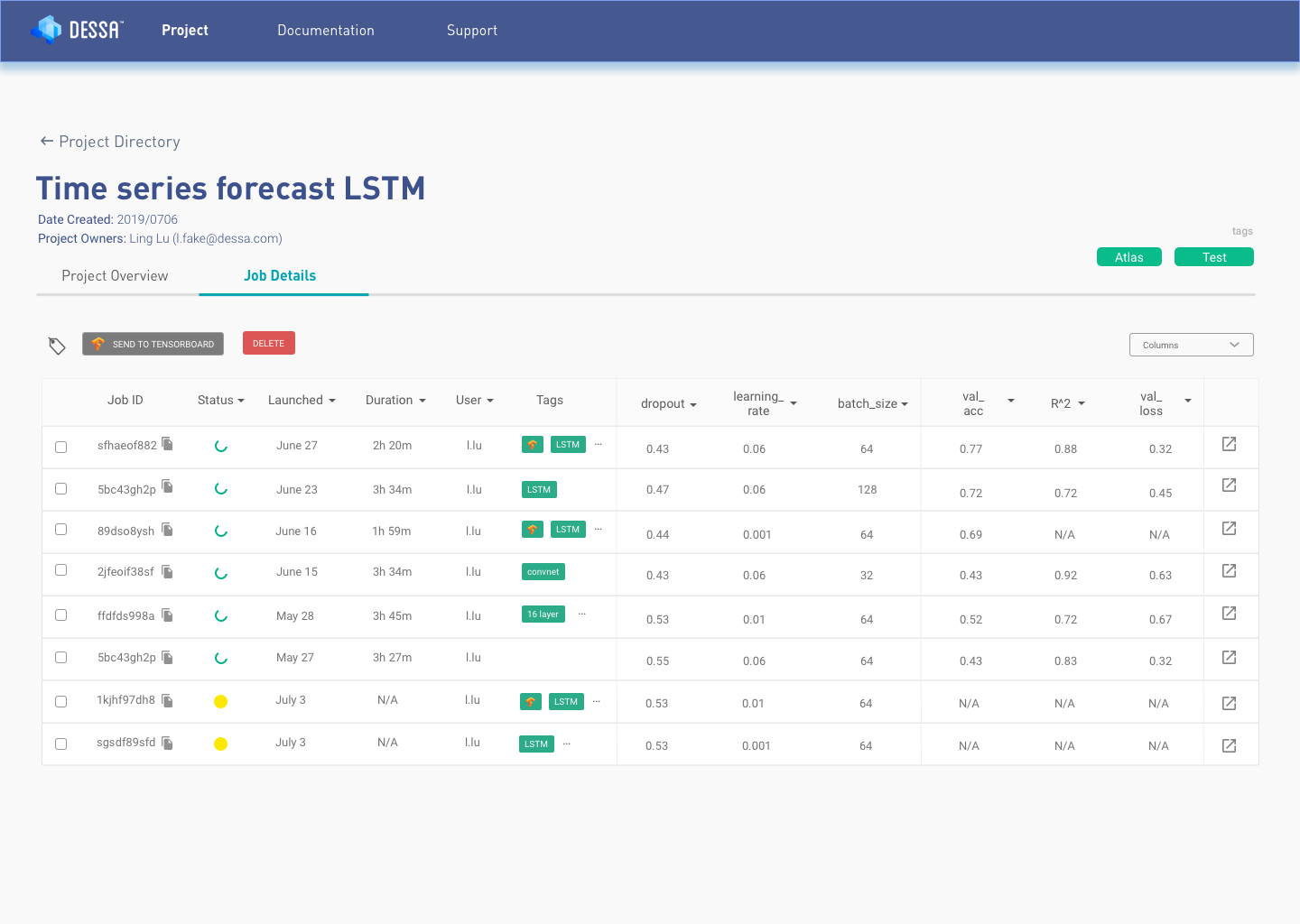

- Job scheduling: Atlas’ job scheduler handles experiment management for you, running 1000s of experiments concurrently and asynchronously.

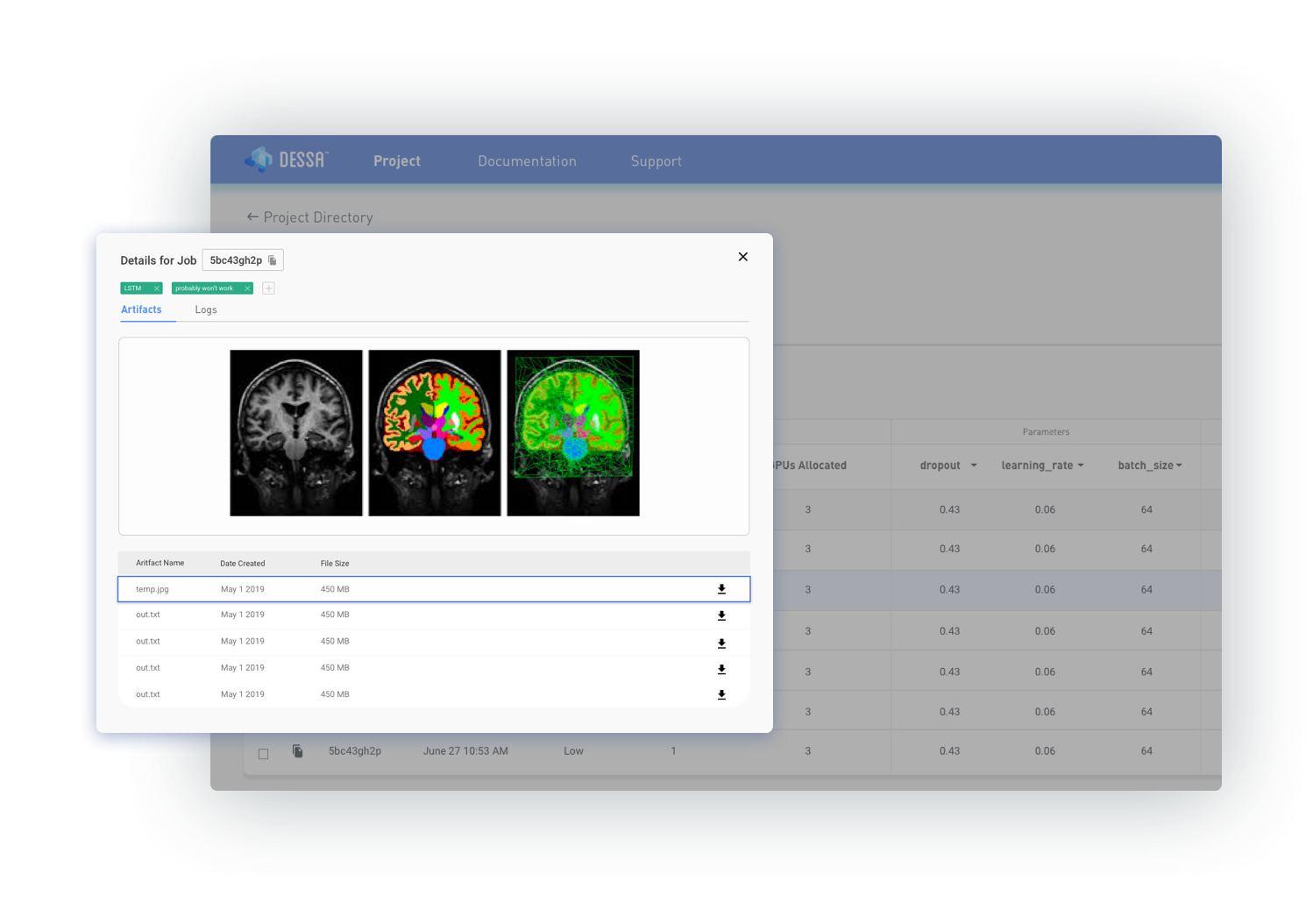

- Experiment management & tracking: Tag experiments and easily track hyperparameters, metrics, and artifacts such as images, GIFs, and audio clips in a web-based dashboard.

- Reproducibility: Every job run is recorded and tracked using a unique job ID, making it easy to reproduce and share any experiment.

- Python SDK: An easy-to-use SDK makes Atlas compatible with any machine learning library or framework. The SDK also allows you to run hyperparameter optimization programmatically.

- Built-in TensorBoard integration: Directly compare multiple job runs on TensorBoard within the Atlas GUI.

- Keycloak integration: Set up your authentication server easily and manage access to your DL cluster with Atlas’ built-in Keycloak integration.

- Self-hosted: Run Atlas on a single node (e.g. your laptop) or on a multi-node cluster (e.g. on-premises servers or cloud clusters on AWS, GCP, etc.).
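To make the reproducibility idea in the list above more concrete, here is a small sketch of what tracking runs by a unique job ID can look like. This is not how Atlas implements it internally: the JSON Lines file, the UUID-based IDs, and the `record_job` and `load_job` helpers are all assumptions made for illustration.

```python
import json
import time
import uuid


def record_job(params, metrics, log_path="jobs.jsonl"):
    """Append one job record to a JSON Lines file and return its job ID."""
    job_id = str(uuid.uuid4())  # hypothetical ID scheme for this sketch
    record = {
        "job_id": job_id,
        "created_at": time.time(),
        "params": params,
        "metrics": metrics,
    }
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return job_id


def load_job(job_id, log_path="jobs.jsonl"):
    """Look up a recorded job by ID; returns None if no record matches."""
    with open(log_path, encoding="utf-8") as f:
        for line in f:
            record = json.loads(line)
            if record["job_id"] == job_id:
                return record
    return None
```

With a record like this, sharing an experiment comes down to sharing its job ID: anyone with access to the log can look up the exact hyperparameters and metrics behind a result.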

Get started

Find the Atlas repository on GitHub and read the docs to install it on your local machine. We’ve also included an image segmentation tutorial to make it easy to get started.

Contribution

Now that Atlas is open source, we’re excited for external contributions to the code! If you’re interested in creating a PR, follow the guidelines here.

Get in touch

Have a question about Atlas or want to talk more about how to contribute? We would love to hear from you! Join our community Slack and get in touch with our machine learning engineering team directly.